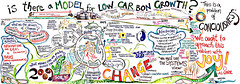

Image via Wikipedia

The essence of good knowledge work is uniqueness not uniformity. The ideal knowledge work product is exactly what your client asked for and could only have been created by you. The challenge is that we have been conditioned by the industrial economy to value uniformity and see uniqueness as undesirable variation instead of the essential quality it has become.

To deliver better knowledge work products, we need to unlearn habits and develop new perspectives. Our inappropriate habits stem from assumptions about industrial work. With industrial thinking, once you ve created a new product the goal becomes how to replicate it predictably. You specify the characteristics of the output precisely, lock down the process, or, ideally, do both. That works if you need to manufacture cars or calculate every employee s pay stub correctly. It doesn t when the goal is to create the new product. The primary challenge here is to shift focus away from the issue of replication and toward creation. The question becomes how do we manage to create this? instead of how do we produce the same thing all over again?

Creating good knowledge work has a challenge beyond simple uniqueness. It is the same challenge faced by artists who once worked at the pleasure of their patrons. You want to create your art, but ultimately, you have to please your patron as well. You need to educate your patron about the nature and qualities of the output you have been commissioned to create and you need to learn from your patron what limits and constraints they face in putting that output to work. The unique insights where value resides may need to be packaged in a document or presentation package comfortable to the organization. Or you may need to place those insights in a more familiar context that lets them be seen by the organization.

Working out a feasible balance between the unique and the familiar is more complex and more subtle than either simply delivering what the customer asks for or creating an off-the-shelf product. It is not precisely a negotiation, nor is it generally a full-bore collaboration.

A basic strategy for discovering an appropriate knowledge work output has three parts.

1. Defining a deliverable.

If you ve grown up in a professional services environment, what s the deliverable? gets tattooed on the inside of your eyeballs. It s essential in professional services because it creates something tangible for which you can get paid. In any form of knowledge work the notion is valuable in that it makes something tangible out of what can otherwise be an amorphous process. A deliverable might be a spreadsheet or a presentation, an executive workshop, or a first release of a new information system; regardless, it needs to be something that both you and your client can point to and decide that it is done.

2. Understanding how the deliverable will be used.

The most important thing to understand about a deliverable is what your customer intends to do with it. Will it be used to identify a decision to be made? Justify that decision to someone higher in the food chain? Generate or eliminate alternatives?

Each of these possible uses influences what is important about the deliverable and what is secondary or irrelevant. For example, if your customer wants to understand whether a particular technology does or doesn t work in their current environment, a lengthy analysis of the current state of the software market isn t likely to be relevant.

That same analysis may be the essential requirement of someone evaluating whether to introduce their technology to the market. Whether you invest in preparing the analysis depends first and foremost on whether someone needs it.

3. Determining how the deliverable can be created.

Understanding how a deliverable will be used sets limits and focuses your attention on how it should be created. An executive who needs an answer about whether to invest in a new distribution channel may prefer that answer delivered on a one-page memo. However, if your customer is that executive s support staff, you may need to create substantial supporting analyses. Even in that case, whether you deliver that supporting analysis as a bound report, an electronic document, or organized as a series of frequently asked questions will affect both its value to the customer and its cost (to you) to create.

This three-step process should be followed for every piece of knowledge work we create. The urge to standardize outputs or processes is a holdover from industrial practices that is inappropriate in a knowledge work environment. Applied here, it risks short-circuiting the essential interaction. We must define and deliver the unique output that integrates client needs with your ability to create an appropriately unique solution.

![Reblog this post [with Zemanta]](http://img.zemanta.com/reblog_e.png?x-id=84fe7ac4-f72a-4e23-857a-e0939615e6fb)

![Reblog this post [with Zemanta]](http://img.zemanta.com/reblog_e.png?x-id=b2dd0bce-dabf-4ec8-8f08-7145ef86e397)